Program

9:15-9:30 Welcome

9:30-10:00 Prof. Gerald Loeb (University of Southern California, U.S.A) (confirmed)

10:00-10:30 Prof. Veronica Santos (UCLA, U.S.A) (confirmed)

10:30-10:50 Poster teasers

10:50-11:20 Coffee break and poster session

11:20-11:50 Dr. Lorenzo Natale (iCub Facility, IIT, Italy) (confirmed)

11:50-12:20 Prof. Jan Peters (TU Darmstadt and MPI for Intelligent Systems, Germany) (confirmed)

12:20-12:50 Dr. Serena Ivaldi (INRIA Nancy Grand-Est, France) (confirmed)

12:50-14:00 Lunch break

14:00-14:30 Dr. Robert Haschke (AGNI, Bielefeld University, Germany) (confirmed)

14:30-15:00 Prof. Tamim Asfour (KIT, Germany) (confirmed)

15:00-15:30 Prof. Patrick van der Smagt (TUM, Germany) (confirmed)

15:30-16:00 Coffee break

16:00-16:30 Prof. Gordon Cheng (TUM, Germany) (confirmed)

16:30-17:00 Prof. Charles C. Kemp (Georgia Tech, U.S.A) (confirmed)

17:00-17:30 Round table discussion and closing

Poster contribution

Shared Video

https://www.youtube.com/watch?v=QMzcKJtNkxA

https://www.youtube.com/watch?v=CmeDWcjeOxA

Speakers & Panel Members Details

Gerald Loeb (M’98) received a B.A. (’69) and M.D. (’72) from Johns Hopkins University and did one year of surgical residency at the University of Arizona before joining the Laboratory of Neural Control at the National Institutes of Health (1973-1988). He was Professor of Physiology and Biomedical Engineering at Queen’s University in Kingston, Canada (1988-1999) and is now Professor of Biomedical Engineering and Director of the Medical Device Development Facility (MDDF) at the University of Southern California (http://mddf.usc.edu). Dr. Loeb was one of the original developers of the cochlear implant to restore hearing to the deaf and was Chief Scientist for Advanced Bionics Corp. (1994-1999), manufacturers of the Clarion® cochlear implant. He is a Fellow of the American Institute of Medical and Biological Engineers, holder of 64 issued US patents and author of over 250 scientific papers (available from http://bme.usc.edu/gloeb).

Most of Dr. Loeb’s current research is directed toward sensorimotor control of paralyzed and prosthetic limbs. His MDDF research team developed BION™ injectable neuromuscular stimulators and proved their safety and efficacy in several pilot clinical trials. Dr. Loeb is Chief Scientist for General Stim Inc., which is developing a new product based on this concept. The MDDF is using related technology to develop a cardiac micropacemaker to be deployed in a fetus in utero. The MDDF developed the BioTac®, a biomimetic tactile sensor for robotic and prosthetic hands, now commercialized through a spin-off company for which Dr. Loeb is Chief Executive Officer (www.SynTouchLLC.com). Dr. Loeb’s academic research at USC includes computer models of musculoskeletal mechanics and the interneuronal circuitry of the spinal cord, which facilitates control and learning of voluntary motor behaviors by the brain. These projects build on Dr. Loeb’s prior physiological research into the properties and natural activities of muscles, motoneurons, proprioceptors and spinal reflexes.

Title:

Machine Touch for Dexterous Robotic and Prosthetic Hands

Abstract:

Anyone who has ever had fingers numb from the cold knows that dexterity is impossible without tactile feedback, so industrial robots and prosthetic hands have been fundamentally limited. Robots do not function well in poorly controlled environments or with unfamiliar objects, making them slow, unreliable and often unsafe to operate alongside human workers. In order to achieve speed and precision, robots tend to be rigid, heavy and expensive, while human workers rely successfully on soft, sloppy muscles supported by tactile sensing and reflexes to compensate for errors. We have applied biomimetic design – copying the principles used by Mother Nature – to develop the BioTac, a tactile sensor that has the same mechanical properties and sensing modalities as the human fingertip. When combined with biomimetic algorithms for motion control and tactile perception, this technology can be applied to a wide range of jobs that robots now perform poorly or not at all. We have used a simple version of an inhibitory spinal reflex to enable users of a myoelectric prosthetic hand to handle delicate objects without crushing them. We have developed an optimal exploration strategy whereby a robot can identify objects by their tactile properties. Other researchers have combined the multimodal signals from the BioTac in neural networks that learn to perform complex tasks. Analogous to the early days of machine vision after the invention of the video camera, the next phase of Machine Touch can now focus on signal analysis rather than signal acquisition.

Veronica J. Santos is an Associate Professor in the Mechanical and Aerospace Engineering Department at the University of California, Los Angeles, and Director of the UCLA Biomechatronics Lab (http://BiomechatronicsLab.ucla.edu). Dr. Santos received her B.S. in mechanical engineering with a music minor from the University of California at Berkeley (1999), was a Quality and R&D Engineer at Guidant Corporation, and received her M.S. and Ph.D. in mechanical engineering with a biometry minor from Cornell University (2007). While a postdoc at the University of Southern California, she contributed to the development of a biomimetic tactile sensor for prosthetic hands. From 2008 to 2014, Dr. Santos was an Assistant Professor in the Mechanical and Aerospace Engineering Program at Arizona State University. Her research interests include human hand biomechanics, human-machine systems, haptics, tactile sensors, machine perception, prosthetics, and robotics for grasp and manipulation. Dr. Santos was selected for an NSF CAREER Award (2010), two ASU Engineering Top 5% Teaching Awards (2012, 2013), an ASU Young Investigator Award (2014), and as an NAE Frontiers of Engineering Education Symposium participant (2010).

Title:

Abstract:

Many everyday tasks involve hand-object interactions that are visually occluded. In such situations, manipulation can often be accomplished with tactile and proprioceptive feedback. For robots, this requires tactile sensor hardware that can encode task-relevant features. It also requires techniques for transforming low-level tactile sensor data into high-level percepts for task-driven decision-making and learning. I will present a recent study in which a robot learned how to perform a functional contour-following task: the closure of a ziplock bag. This task is challenging for a robot because the bag is deformable, transparent, and visually occluded by artificial fingertip sensors that are also compliant. A Contextual Multi-Armed Bandit reinforcement learning algorithm was implemented to efficiently explore the state-action space while learning a hard-to-code task. As robots are increasingly used to perform complex, physically interactive tasks in unstructured (or unmodeled) environments, it becomes increasingly important to develop methods that enable efficient and effective learning with limited resources such as hardware life and researcher time. Time allowing, I will also present preliminary efforts to develop haptic perception capabilities for tactile sensors operating within granular media.
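The abstract does not describe how the Contextual Multi-Armed Bandit was set up, so the following is only a minimal sketch of the general idea, assuming a tabular epsilon-greedy learner rather than the algorithm actually used in the study. The context names, action names and the simulated reward function are all hypothetical and chosen purely for illustration: the learner observes a discrete tactile context, picks an action, and updates a running-average reward estimate for that (context, action) pair.

```python
import random
from collections import defaultdict


class EpsilonGreedyContextualBandit:
    """Tabular contextual bandit: one running-average reward per (context, action)."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.value = defaultdict(float)  # estimated reward per (context, action)
        self.count = defaultdict(int)    # number of updates per (context, action)

    def select(self, context):
        # Explore with probability epsilon, otherwise pick the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.value[(context, a)])

    def update(self, context, action, reward):
        # Incremental mean of the rewards observed for this (context, action) pair.
        key = (context, action)
        self.count[key] += 1
        self.value[key] += (reward - self.value[key]) / self.count[key]


def simulated_reward(context, action):
    # Hypothetical stand-in for the robot: moving back toward the seal is rewarded.
    best = {
        "left_of_seal": "move_right",
        "on_seal": "move_forward",
        "right_of_seal": "move_left",
    }
    return 1.0 if best[context] == action else 0.0


contexts = ["left_of_seal", "on_seal", "right_of_seal"]
actions = ["move_left", "move_forward", "move_right"]
bandit = EpsilonGreedyContextualBandit(actions, epsilon=0.2, seed=0)
env_rng = random.Random(1)

for _ in range(2000):
    ctx = env_rng.choice(contexts)
    act = bandit.select(ctx)
    bandit.update(ctx, act, simulated_reward(ctx, act))

# Greedy action per context after learning.
greedy_policy = {c: max(actions, key=lambda a: bandit.value[(c, a)]) for c in contexts}
print(greedy_policy)
```

Unlike full reinforcement learning, a bandit of this kind treats each decision as independent of future states, which keeps the number of trials small. That matters when every trial costs hardware wear and researcher time.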

Lorenzo Natale received his degree in Electronic Engineering (with honours) in 2000 and his Ph.D. in Robotics in 2004 from the University of Genoa. He was later a postdoctoral researcher at the MIT Computer Science and Artificial Intelligence Laboratory. He was an invited professor at the University of Genoa, where he taught the courses Natural and Artificial Systems and Anthropomorphic Robotics for students of the Bioengineering curriculum. He is currently a Tenure-Track Researcher at the IIT.

Over the past ten years, Lorenzo Natale has worked on various humanoid platforms. He was one of the main contributors to the design and development of the iCub platform and has been leading the development of the iCub software architecture and the YARP middleware. His research interests range from vision and tactile sensing to software architectures for robotics. He has contributed as co-PI to several EU-funded projects (CHRIS, Walkman, Xperience, TACMAN, KOROIBOT and WYSIWYD) and is the author of about 100 papers in international peer-reviewed journals and conferences. He served as Program Chair of ICDL-Epirob 2014 and has been associate editor of international conferences (RO-MAN, ICDL-Epirob, Humanoids, ICRA) and journals (IJHR, IJARS and the Humanoid Robotics specialty of Frontiers in Robotics and AI).

Title:

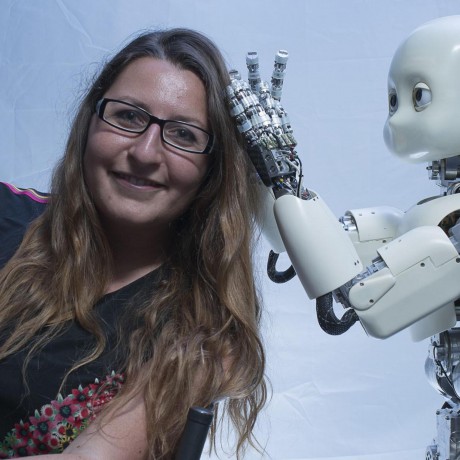

Tactile control and perception on the iCub robot

Abstract:

The iCub is a humanoid robot shaped like a four-year-old child. It is equipped with 53 motors that actuate the degrees of freedom of the head, arms and legs. In the past few years we have worked to equip the iCub with a system of tactile sensors. This "robot skin" consists of approximately 4500 sensors distributed over the hands, arms, torso and legs. In my talk I will illustrate how these sensors have been used to control the interaction between the robot and the environment. I will show examples of whole-body control using vision and touch, tactile-driven object manipulation and object perception.

Jan Peters is a full professor (W3) for Intelligent Autonomous Systems at the Computer Science Department of the Technische Universitaet Darmstadt and at the same time a senior research scientist and group leader at the Max-Planck Institute for Intelligent Systems, where he heads the interdepartmental Robot Learning Group. Jan Peters has received the Dick Volz Best 2007 US PhD Thesis Runner-Up Award, the Robotics: Science & Systems - Early Career Spotlight, the INNS Young Investigator Award, and the IEEE Robotics & Automation Society’s Early Career Award as well as sixteen best paper awards, most recently, the IEEE Brain Initiative Best Paper Award. In 2015, he was awarded an ERC Starting Grant.

Jan Peters studied Computer Science, Electrical, Mechanical and Control Engineering at TU Munich and FernUni Hagen in Germany, at the National University of Singapore (NUS) and the University of Southern California (USC). He received four Master’s degrees in these disciplines as well as a Computer Science PhD from USC.

Title:

Learning for Tactile Manipulation

Abstract:

Due to the recent advent of the required technology to bring tactile sensing into manipulation, we may finally take robots out of well-controlled environments and place them into scenarios impossible without the sense of touch. To accomplish this goal, the key problem of developing an information processing and control methodology enabling robot hands to exploit such novel tactile sensitivity needs to be addressed in order to make robot hands as dexterous as human hands. We aim at endowing robot hands with the core ability of learning to make substantial use of the data from tactile sensors during manipulation, i.e., generate actions based on the information obtained through the feeling in the human’s fingers.

During this talk, we will show our attempts to close the performance gap in the ability to learn manipulation by making such tactile sensory information more comprehensible, and by using the information provided by such sensors intelligently for behavior generation. We focus on four core questions: (i) How can we learn to predict from tactile signals? (ii) How can we efficiently explore our environment through touch? (iii) How can we control the interaction with the environment and stabilize an object by single- or multi-finger gripping? (iv) How can we learn to manipulate an object in hand? We will showcase multiple scenarios on different robot hands, ranging from single-finger to complex multi-fingered manipulation.

Serena Ivaldi is a permanent researcher at INRIA. She received her B.S. and M.S. degrees in Computer Engineering, both with highest honors, from the University of Genoa (Italy) and her PhD in Humanoid Technologies in 2011, jointly from the University of Genoa and the Italian Institute of Technology. There she also held a research fellowship in the Robotics, Brain and Cognitive Sciences Department, working on the development of the humanoid iCub. She was a postdoctoral researcher at the Institute of Intelligent Systems and Robotics (ISIR) at University Pierre et Marie Curie, Paris, then at the Intelligent Autonomous Systems Laboratory of the Technical University of Darmstadt, Germany. Since November 2014, she has been a researcher at INRIA Nancy Grand-Est, in the robotics team Larsen. She has been PI in the European FP7 Project CODYCO (2013-2017) and in the European H2020 project AnDy (2017-2020). Her research focuses on robots interacting physically and socially with humans, blending learning, dynamics estimation, perception and control.

Title:

Exploiting tactile information for whole-body dynamics, learning and human-robot interaction

Robert Haschke received the diploma and PhD in Computer Science from the University of Bielefeld, Germany, in 1999 and 2004, working on the theoretical analysis of oscillating recurrent neural networks. Robert is currently heading the Robotics Group within the Neuroinformatics Group, working on a bimanual robot setup for interactive learning. His fields of research include recurrent neural networks, cognitive bimanual robotics, grasping and manipulation with multifingered dextrous hands, tactile sensing, and software integration.

Title:

Tactile Servoing – Tactile-Based Robot Control

Abstract:

Modern tactile sensor arrays provide contact force information at reasonable frame rates suitable for robot control. Using machine learning techniques such as deep learning, we can learn to detect incipient slippage, to control grasping forces, and to explore object surfaces – all without knowing the shape, weight or friction properties of the object.

Tamim Asfour is a full Professor at the Institute for Anthropomatics and Robotics, Karlsruhe Institute of Technology (KIT), where he holds the chair of Humanoid Robotics Systems and is head of the High Performance Humanoid Technologies Lab (H2T). His current research interest is high performance 24/7 humanoid robotics. Specifically, his research focuses on engineering humanoid robot systems with the capabilities of predicting, acting and learning from human demonstration and sensorimotor experience. He is the developer of the ARMAR humanoid robot family and has led the humanoid research group at KIT since 2003.

Title:

Bootstrapping Object Exploration and Dexterous Manipulation

Abstract:

Exploiting interaction with the environment is a powerful way to enhance robots’ capabilities and robustness while executing tasks in the real world. We present results on the integration of vision, haptics, force and the physical capabilities of the robot to allow discovery, segmentation, visual learning and grasping of unknown objects. Further, we present a novel approach for local implicit shape estimation which allows reconstructing local object details such as edges and corners based on sparse data. To acquire information about contact normals and surface orientation during haptic exploration, we present a novel IMU-based haptic sensor which self-aligns with the contact surface and allows direct measurement of surface normals from a single contact between the fingertip and the object.

Patrick van der Smagt is head of AI Research at Volkswagen Group Data Lab in Munich, focussing on deep learning for robotics. He previously directed a joint lab on biomimetic robotics and machine learning as professor for computer science at TUM and head of the ML lab at fortiss Munich. Besides publishing numerous papers and patents on machine learning, robotics, and motor control, he has won various awards, including the 2014 King-Sun Fu Memorial Award, the 2013 Helmholtz-Association Erwin Schrödinger Award, and the 2013 Harvard Medical School/MGH Martin Research Prize. He is founding chairman of a not-for-profit organisation for Assistive Robotics for tetraplegics and co-founder of various companies.

Title:

When using tactile sensor data in manipulation tasks, supervised learning only goes so far. We focus on unsupervised methodologies instead: based on variational inference, and implemented in deep neural networks, we have developed a framework for creating physically interpretable latent spaces from non-Markovian state spaces. In my presentation I will demonstrate this based on different tactile sensors, and show how the latent space can be used in manipulation and control.

Title: