Online 3D Scene Segmentation

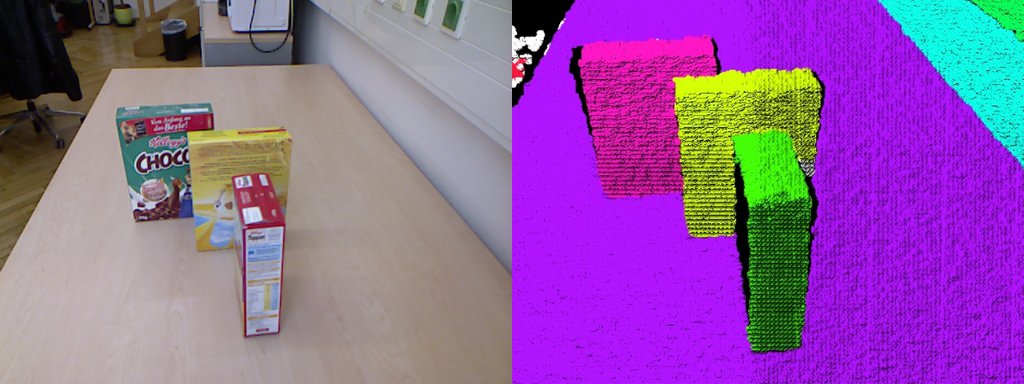

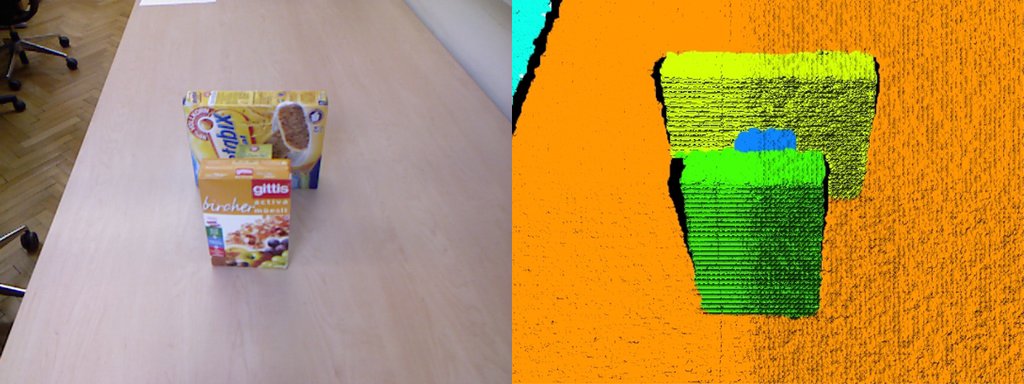

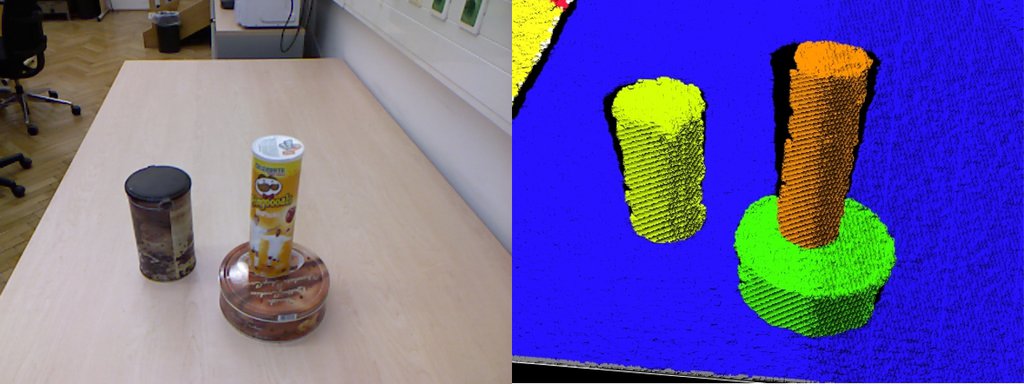

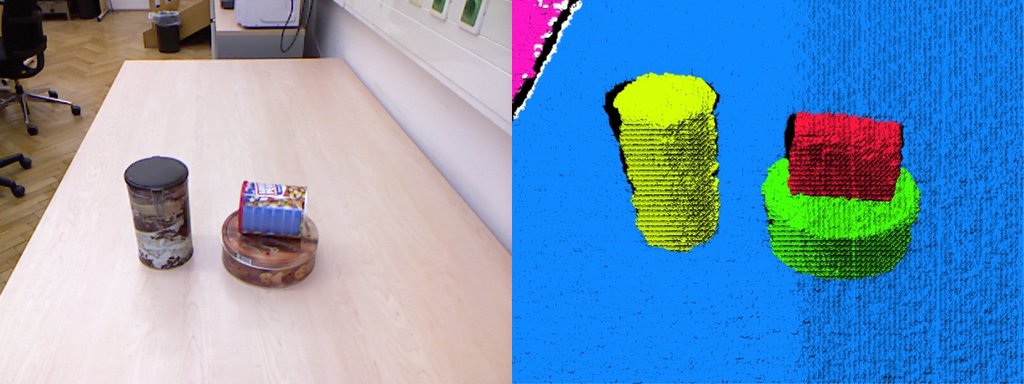

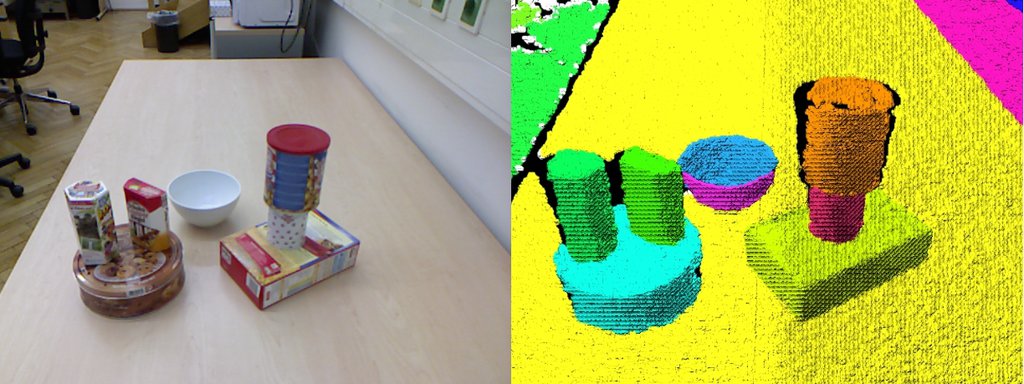

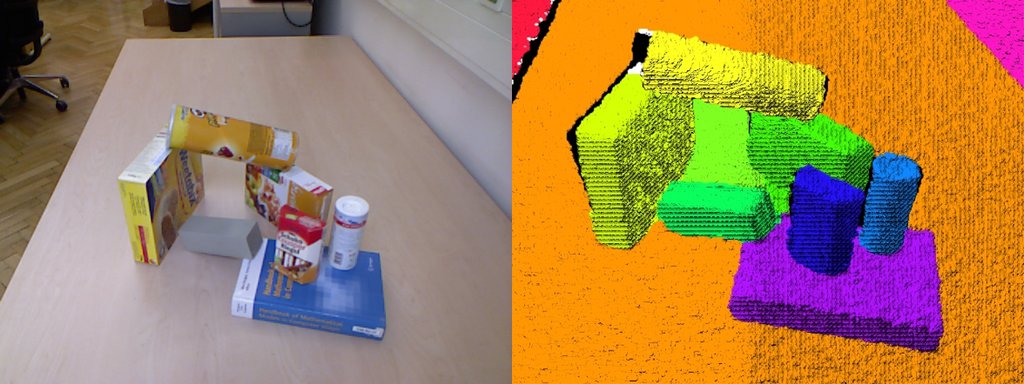

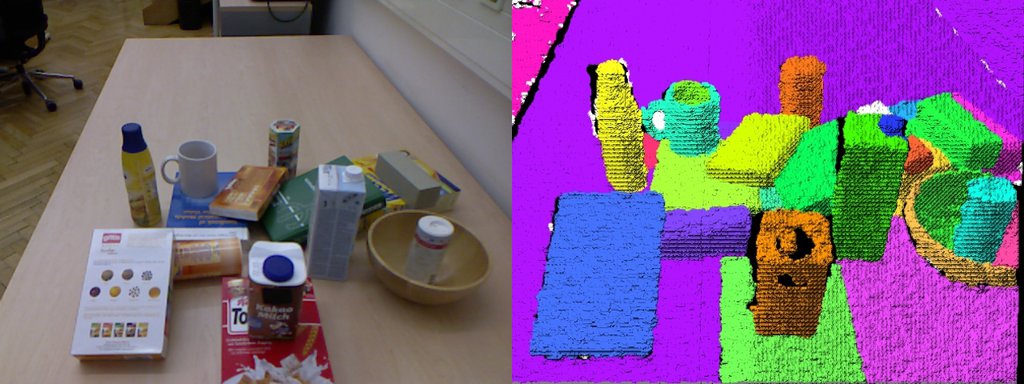

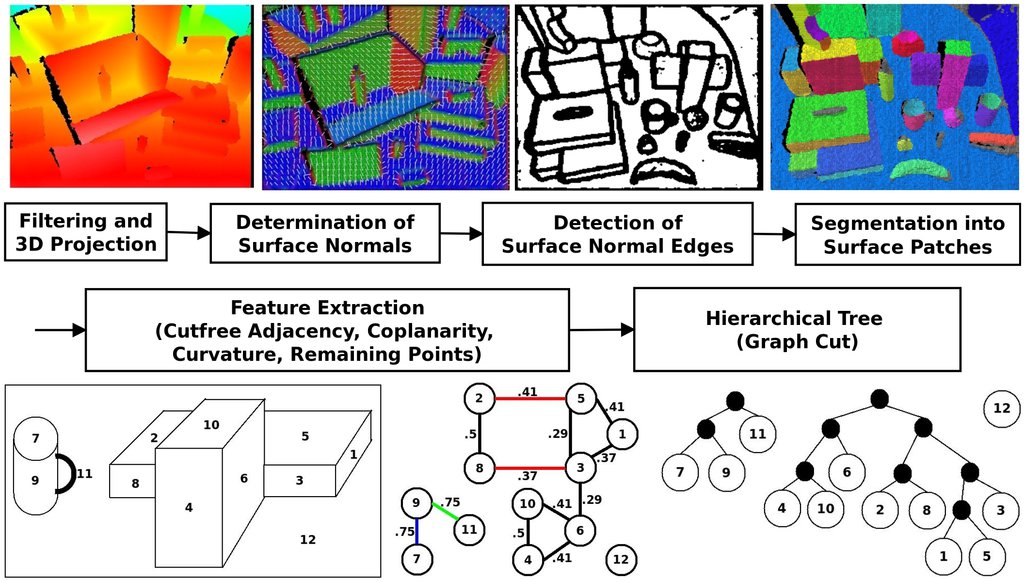

A major prerequisite for many robotics tasks is the ability to identify and localize objects within scenes. Our model-free approach to scene segmentation employs RGBD cameras to segment highly cluttered scenes in real time (30 Hz). To this end, we first identify smooth object surfaces and subsequently combine them to form object hypotheses, employing basic heuristics such as convexity, shape alignment and color similarity.
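The surface-combination step can be illustrated with a minimal sketch. It is not the implementation used in this project: the `Surface` type, the adjacency list, the color threshold and the specific convexity test (comparing the difference of normals with the difference of centroids) are assumptions chosen for illustration, and shape alignment is left out entirely.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Vec = Tuple[float, float, float]


@dataclass(frozen=True)
class Surface:
    """Hypothetical summary of one smooth surface patch."""
    centroid: Vec
    normal: Vec   # unit normal pointing away from the object
    color: Vec    # mean RGB, each channel in [0, 1]


def _sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def is_convex(a: Surface, b: Surface) -> bool:
    # Assumed criterion: two patches meet convexly if their normals
    # diverge along the line connecting their centroids.
    return _dot(_sub(a.normal, b.normal), _sub(a.centroid, b.centroid)) > 0.0


def color_close(a: Surface, b: Surface, max_dist: float = 0.3) -> bool:
    # Assumed criterion: Euclidean distance in RGB below a threshold.
    d = _sub(a.color, b.color)
    return _dot(d, d) ** 0.5 <= max_dist


def group_surfaces(surfaces: List[Surface],
                   adjacency: List[Tuple[int, int]]) -> List[List[int]]:
    """Merge adjacent surfaces that pass both heuristics (union-find)."""
    parent = list(range(len(surfaces)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i, j in adjacency:
        if is_convex(surfaces[i], surfaces[j]) and color_close(surfaces[i], surfaces[j]):
            parent[find(i)] = find(j)

    groups: Dict[int, List[int]] = {}
    for i in range(len(surfaces)):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())


# A red box standing on a grey table: top and side face merge, the table stays separate.
red, grey = (0.8, 0.1, 0.1), (0.5, 0.5, 0.5)
scene = [
    Surface(centroid=(0.0, 0.0, 1.0), normal=(0.0, 0.0, 1.0), color=red),    # box top
    Surface(centroid=(1.0, 0.0, 0.0), normal=(1.0, 0.0, 0.0), color=red),    # box side
    Surface(centroid=(3.0, 0.0, -1.0), normal=(0.0, 0.0, 1.0), color=grey),  # table
]
object_hypotheses = group_surfaces(scene, adjacency=[(0, 1), (1, 2)])
```

In this toy scene the box faces form a convex edge and share a color, so they end up in one hypothesis, while the concave edge between box and table keeps the table apart.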

Because the approach is model-free, it can recognize unknown objects as well, in contrast to many other state-of-the-art approaches. As the results shown below demonstrate (evaluated on the Object Segmentation Database), we can handle very complex scenes with stacked and partially occluded objects.

An extension to the real-time scene segmentation approach generates a complete hierarchy of segmentation hypotheses. An object classifier traverses the hypothesis tree in a top-down manner, returning good object hypotheses and thus helping to select the correct level of abstraction for segmentation while avoiding over- and under-segmentation. By combining model-free, bottom-up segmentation results with trained, top-down classification results, our approach improves both classification and segmentation. It allows for the identification of object parts and complete objects (e.g. a mug composed of the handle and its inner and outer surfaces) in a uniform and scalable framework.
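The top-down traversal can be sketched roughly as follows. This is an illustration under stated assumptions, not the project's classifier: the `Hypothesis` node type, the `score` callback, the fixed threshold and the rule of not descending below an accepted node are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Hypothesis:
    """Hypothetical node of the segmentation hierarchy."""
    name: str
    children: List["Hypothesis"] = field(default_factory=list)


def select_hypotheses(root: Hypothesis,
                      score: Callable[[Hypothesis], float],
                      threshold: float = 0.5) -> List[Hypothesis]:
    """Walk the tree top-down and keep the first confident node on each branch."""
    accepted: List[Hypothesis] = []
    stack = [root]
    while stack:
        node = stack.pop()
        if score(node) >= threshold:
            # Confident classification: accept this level of abstraction
            # and do not split the segment any further.
            accepted.append(node)
        else:
            # Not recognized as an object: try the finer segments instead.
            stack.extend(reversed(node.children))
    return accepted


# Toy hierarchy: the whole scene is not an object, but the mug and the box are.
mug = Hypothesis("mug", [Hypothesis("handle"), Hypothesis("body")])
scene_root = Hypothesis("scene", [mug, Hypothesis("box")])
toy_scores = {"scene": 0.1, "mug": 0.9, "box": 0.8, "handle": 0.7, "body": 0.6}

selected = select_hypotheses(scene_root, score=lambda h: toy_scores[h.name])
selected_names = [h.name for h in selected]
```

Stopping at the first confident node is what prevents over-segmentation in this sketch; a variant that also reports object parts would keep descending after accepting a node.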

The segmentation algorithm can also be used to identify basic pointing gestures, so that a human operator can guide the robot's attention to specific objects in the scene. To this end, we evaluate both the pointing gesture itself and the location of object candidates in the scene to achieve more robust recognition results.
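One simple way to combine a pointing gesture with object candidates is to pick the candidate whose centroid lies closest to the pointing ray. The sketch below assumes exactly that; the function name, the ray representation and the 15 degree tolerance are hypothetical and not taken from the system described here.

```python
import math
from typing import Optional, Sequence, Tuple

Vec = Tuple[float, float, float]


def pick_pointed_object(origin: Vec,
                        direction: Vec,
                        candidates: Sequence[Vec],
                        max_angle_deg: float = 15.0) -> Optional[int]:
    """Return the index of the candidate centroid best aligned with the ray.

    Returns None if no candidate lies within max_angle_deg of the ray.
    """
    dir_norm = math.sqrt(sum(d * d for d in direction))
    if dir_norm == 0.0:
        return None

    best_index: Optional[int] = None
    best_angle = max_angle_deg
    for index, centroid in enumerate(candidates):
        to_obj = tuple(c - o for c, o in zip(centroid, origin))
        obj_norm = math.sqrt(sum(v * v for v in to_obj))
        if obj_norm == 0.0:
            continue  # candidate coincides with the ray origin
        cos_angle = sum(v * d for v, d in zip(to_obj, direction)) / (obj_norm * dir_norm)
        # Clamp against rounding errors before taking the arc cosine.
        angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
        if angle <= best_angle:
            best_index, best_angle = index, angle
    return best_index


# Pointing along +x: the second candidate is almost on the ray.
centroids = [(1.0, 1.0, 0.0), (2.0, 0.1, 0.0), (-1.0, 0.0, 0.0)]
pointed = pick_pointed_object((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), centroids)
```

Restricting the choice to segmented object candidates, rather than intersecting the ray with raw depth data, is what lets a noisy gesture estimate still resolve to a single object.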