Universität Bielefeld › Technische Fakultät › NI


Multimodal time series modeling and segmentation

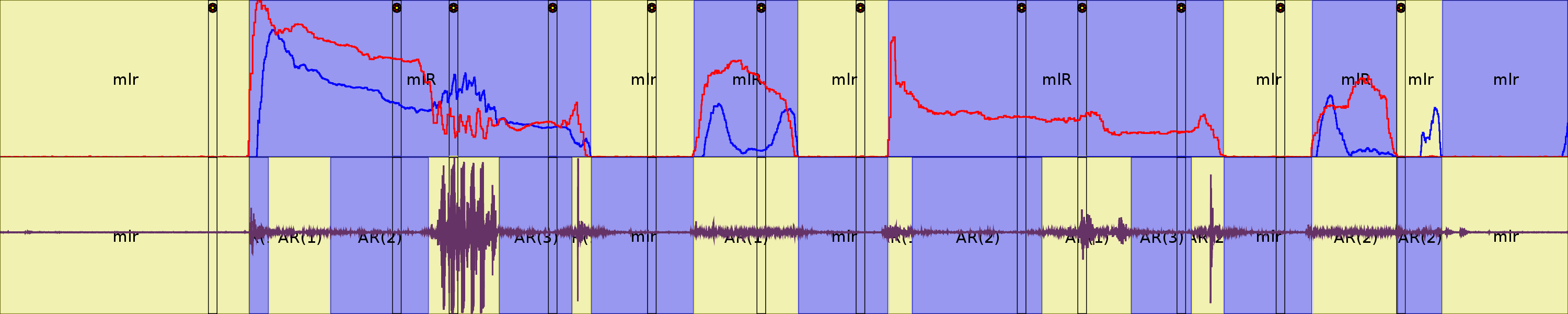

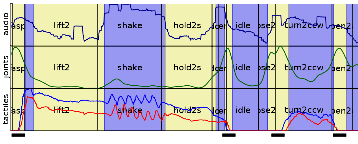

In this project we investigate approaches for the unsupervised segmentation of interaction sequences based on multimodal data. The proposed procedure estimates segment borders across all modalities in a single pass. The resulting segments can be interpreted as single-handed or bimanual manipulation primitives identified within a continuous sequence. We consider sequences of manipulations applied by a human subject to an instrumented object. These sequences contain bimanual and single-handed object manipulations such as grasping, lifting, putting down, pouring, holding, shaking, and screwing and unscrewing the lid. The observation sequences are recorded using a contact microphone, a pair of Immersion CyberGloves, and five pressure sensors positioned on the fingertips of each hand. We apply an unsupervised Bayesian segmentation method to these data, in conjunction with a product model that combines a set of modality-specific model components for the segment representation. The five simple model components represent one audio modality plus, for the left and for the right hand, one joint-angle modality and one force-feedback modality. Weight parameters control the respective influence of each modality-specific component within the product model.
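The page does not specify the form of the modality-specific components or the segmentation algorithm, so the following Python sketch only illustrates the general idea under our own assumptions. Each component is a Gaussian with known noise variance whose mean has a Gaussian prior and is integrated out. The weighted product model is scored in log space as the sum over modalities m of w_m · log p_m(segment). Segment borders are found by exact dynamic programming (optimal partitioning), which stands in for the project's own Bayesian inference procedure. The names `gaussian_log_marginal` and `segment`, the parameters `noise_var`, `prior_var` and `penalty`, and the synthetic data are all hypothetical.

```python
import numpy as np


def gaussian_log_marginal(s1, s2, n, noise_var=1.0, prior_var=100.0):
    """Log marginal likelihood of n samples with x_i ~ N(mu, noise_var) and
    mu ~ N(0, prior_var), mu integrated out (assumed component model).
    s1 = sum(x), s2 = sum(x**2); works elementwise, one entry per dimension."""
    log_det = n * np.log(2 * np.pi * noise_var) + np.log1p(n * prior_var / noise_var)
    quad = (s2 - prior_var * s1 ** 2 / (noise_var + n * prior_var)) / noise_var
    return -0.5 * (log_det + quad)


def segment(modalities, weights, penalty=0.0):
    """Exact optimal partitioning: maximise the sum over segments of the
    weighted product-model score sum_m w_m * log p_m(segment), minus an
    optional per-segment penalty. Returns the interior border indices."""
    T = modalities[0].shape[0]
    # Prefix sums of x and x**2 give O(1) sufficient statistics per segment.
    prefix = []
    for x in modalities:
        x = x.reshape(T, -1)
        zero = np.zeros((1, x.shape[1]))
        prefix.append((np.vstack([zero, np.cumsum(x, axis=0)]),
                       np.vstack([zero, np.cumsum(x ** 2, axis=0)])))

    def score(i, j):
        # Weighted log score of the half-open segment [i, j) over all modalities.
        total = 0.0
        for w, (c1, c2) in zip(weights, prefix):
            total += w * gaussian_log_marginal(c1[j] - c1[i], c2[j] - c2[i], j - i).sum()
        return total - penalty

    best = np.full(T + 1, -np.inf)
    best[0] = 0.0
    back = np.zeros(T + 1, dtype=int)
    for j in range(1, T + 1):
        for i in range(j):
            cand = best[i] + score(i, j)
            if cand > best[j]:
                best[j], back[j] = cand, i
    # Follow the back-pointers from the end to recover segment starts.
    borders, j = [], T
    while j > 0:
        j = back[j]
        borders.append(j)
    return sorted(int(b) for b in borders if b > 0)


# Hypothetical stand-ins: a 2-D "joint-angle" stream and a 1-D "force" stream
# that both change their mean at t = 50.
rng = np.random.default_rng(0)
T = 100
angles = np.vstack([np.zeros((50, 2)), np.full((50, 2), 5.0)]) + 0.3 * rng.normal(size=(T, 2))
force = np.concatenate([np.zeros(50), np.full(50, -4.0)]) + 0.3 * rng.normal(size=T)
boundaries = segment([angles, force], weights=[1.0, 0.5])
print(boundaries)
```

Because the weights multiply log-likelihoods, setting a weight to 0 removes that modality from border placement, while larger weights let it dominate. The double loop is O(T²) and is only meant for short illustrative sequences.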

In this project we investigate approaches for unsupervised segmentation of interaction sequences based on multimodal data. The proposed procedure estimates segment borders across all modalities in a single pass. Produced segments can be interpreted as single-handed or bimanual manipulation primitives identified within a continuous sequence. We consider sequences of manipulations applied to an instrumented object by a human subject. These sequences contain bimanual and single-handed object manipulations such as grasping, lifting, putting down, pouring, holding, shaking, screwing and unscrewing the lid. The observation sequences are recorded using a contact microphone, a pair of Immersion CyberGloves and five pressure sensors positioned on the fingertips on each hand. To this data we employ an unsupervised Bayesian segmentation method in conjunction with a product model that combines a set of modality-specific model components for the segment representation. These five simple model components represent: one audio modality, two joint-angles and two force-feedback modalities for the left and for the right hand. Weight parameters control the respective influence of each modality-specific component within the product model.