Augmented Reality based Brain-Computer Interfaces

Introduction

In recent years, Brain-Computer Interfaces (BCIs) have mainly been used as a tool that lets paralyzed people communicate with the outside world by spelling letters using only their measurable brain potentials. This relies on a set of computer-generated visual, auditory or tactile stimuli that serve as items for communicating the subject's intentions.

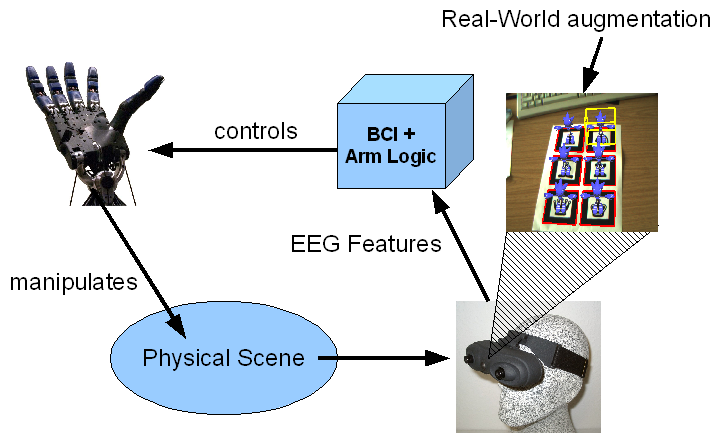

A prime example of this kind is the well-known P300-Speller paradigm, which lets the subject spell letters by focusing attention on the target letters. Using this paradigm as a basis, we aim to develop a system that treats items from real-world scenes as symbols that carry specific semantics. These real-world items could be a telephone, a bottle, or just an abstract entity such as a specific location in a room. A system that can associate semantics with such symbols in a (semi-)automatic way can offer a rich set of associated functions, which are presented to the user as selectable items. Augmented Reality (AR) techniques are used to overlay specific visual stimuli on the real-world items seen through the camera, which transforms the task into a P300-Speller paradigm. This technique provides the link between physical objects and computer-generated stimuli, which is key to the successful application of Brain-Computer Interfaces as assistive devices for completely paralyzed people.
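The association between recognised real-world items and selectable functions could be represented roughly as follows. This is only an illustrative sketch, not the project's implementation: the labels, the actions, and the names `ACTIONS_BY_LABEL`, `SelectableItem` and `build_selectable_items` are all hypothetical, and the object recognition step is assumed to have already produced a list of labels for the current camera view.

```python
from dataclasses import dataclass

# Hypothetical mapping from a recognised object label to the functions
# it offers. These entries are invented for illustration only.
ACTIONS_BY_LABEL = {
    "telephone": ["call contact", "answer call"],
    "bottle": ["request drink"],
}


@dataclass(frozen=True)
class SelectableItem:
    stimulus_id: int  # index of the visual stimulus flashed in the AR view
    label: str        # real-world item the stimulus is attached to
    action: str       # function triggered when this stimulus is selected


def build_selectable_items(scene_labels):
    """Turn the labels found in a scene into a flat list of selectable items.

    Labels without any known action are skipped, so only items that
    carry semantics end up as stimuli.
    """
    items = []
    for label in scene_labels:
        for action in ACTIONS_BY_LABEL.get(label, []):
            items.append(SelectableItem(len(items), label, action))
    return items


if __name__ == "__main__":
    for item in build_selectable_items(["telephone", "bottle"]):
        print(item)
```

Each resulting item plays the role that a letter plays in the classic speller: it is one stimulus among several, and selecting it triggers the associated function instead of typing a character.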

Increasing Flexibility

To increase the applicability of BCI in everyday use, we focus on developing and improving the following aspects:

- Classification accuracy

- Responsiveness

- Intentional-Control (IC) / No-Control (NC) techniques

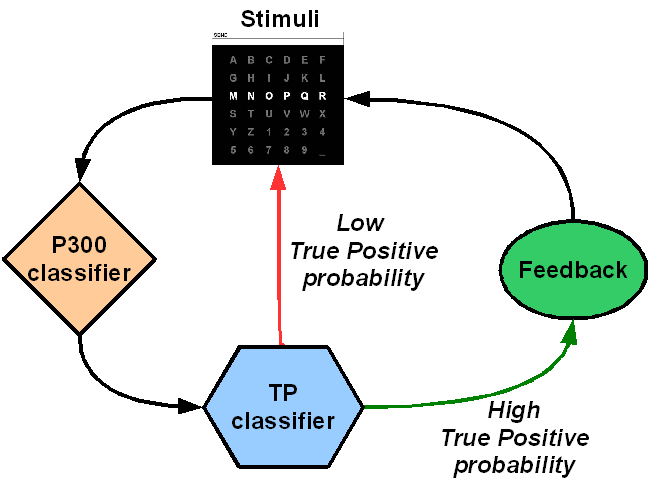

For spelling tasks, it is common to fix the number of stimulus presentations in advance, which results in a predefined time span before a classification is possible. We developed an advanced classification method that adapts, online, the amount of data needed to infer the user's intention, resulting in faster response times while maintaining high accuracy.
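The general idea of adapting the amount of data online can be illustrated with a simple stopping rule. The sketch below is not the method developed in this project, whose details are not given here. It assumes that some classifier already yields one score per item after every round of stimulus presentations, and it stops as soon as the accumulated score of the best item leads the runner-up by a fixed margin. The function name and the `margin` and `min_rounds` parameters are assumptions made for illustration.

```python
def select_with_dynamic_stopping(score_rounds, margin=2.0, min_rounds=2):
    """Accumulate per-item classifier scores and stop as early as possible.

    score_rounds: iterable of sequences, one score per item for each round
                  of stimulus presentations (higher means "more P300-like").
    margin:       required lead of the best item over the runner-up.
    min_rounds:   number of rounds to collect before stopping is allowed.

    Returns (index_of_selected_item, rounds_used).
    """
    totals = None
    rounds_used = 0
    for scores in score_rounds:
        if totals is None:
            totals = [0.0] * len(scores)
        # Add this round's evidence to the running sum for every item.
        totals = [t + s for t, s in zip(totals, scores)]
        rounds_used += 1
        if rounds_used >= min_rounds and len(totals) > 1:
            best, runner_up = sorted(totals, reverse=True)[:2]
            if best - runner_up >= margin:
                break  # evidence is clear enough, no more rounds needed
    if totals is None:
        raise ValueError("no stimulus rounds were provided")
    best_index = max(range(len(totals)), key=totals.__getitem__)
    return best_index, rounds_used


if __name__ == "__main__":
    rounds = [[0.2, 1.5, 0.1], [0.1, 1.4, 0.3], [0.0, 1.6, 0.2]]
    print(select_with_dynamic_stopping(rounds))
```

With a fixed number of presentations, all three rounds in the usage example would be consumed. Under this rule the decision is made after two rounds, because the second item already leads by more than the margin. If the margin is never reached, the rule falls back to using every available round.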